Case Studies: mikai inc.

Content that brings virtual performers into real spaces, created using a FreeD*-compatible PTZ camera system ideal for AR video production

* FreeD is a widely used protocol for transmitting camera tracking information in AR/VR systems. The AW-UE150W/K and AW-UE100W/K transmit the pan, tilt, zoom and focus information required for AR/VR compositing.
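For readers curious about what FreeD data looks like on the wire, the sketch below decodes the commonly documented 29-byte FreeD "D1" message in Python. It assumes the usual layout: pan, tilt and roll as 24-bit signed values in 1/32768-degree units, X/Y/Z position in 1/64 mm, zoom and focus as raw device-specific encoder counts, two spare bytes, and a checksum equal to 0x40 minus the sum of the preceding bytes, modulo 256. How a particular camera fills the position and spare fields is an assumption to verify against its documentation, and the function names are hypothetical.

```python
# Minimal FreeD "D1" message decoder/encoder (sketch; layout as commonly documented).
FREED_D1_LENGTH = 29  # type, camera ID, nine 24-bit fields, 2 spare bytes, checksum


def freed_checksum(body: bytes) -> int:
    """FreeD checksum: 0x40 minus the sum of all preceding bytes, modulo 256."""
    return (0x40 - sum(body)) % 256


def decode_freed_d1(packet: bytes) -> dict:
    """Decode one 29-byte D1 message into engineering units."""
    if len(packet) != FREED_D1_LENGTH or packet[0] != 0xD1:
        raise ValueError("not a FreeD D1 message")
    if freed_checksum(packet[:28]) != packet[28]:
        raise ValueError("FreeD checksum mismatch")

    def s24(offset: int) -> int:  # big-endian signed 24-bit field
        return int.from_bytes(packet[offset:offset + 3], "big", signed=True)

    def u24(offset: int) -> int:  # big-endian unsigned 24-bit field
        return int.from_bytes(packet[offset:offset + 3], "big", signed=False)

    return {
        "camera_id": packet[1],
        "pan_deg": s24(2) / 32768,   # angles: 1/32768 degree per count
        "tilt_deg": s24(5) / 32768,
        "roll_deg": s24(8) / 32768,
        "x_mm": s24(11) / 64,        # positions: 1/64 mm per count
        "y_mm": s24(14) / 64,
        "z_mm": s24(17) / 64,
        "zoom_raw": u24(20),         # raw lens encoder values, device-specific
        "focus_raw": u24(23),
    }


def encode_freed_d1(camera_id: int, pan_deg: float, tilt_deg: float,
                    zoom_raw: int, focus_raw: int) -> bytes:
    """Build a D1 message (roll and position left at zero), e.g. to test a receiver."""
    def s24(value: float) -> bytes:
        return round(value).to_bytes(3, "big", signed=True)

    body = (bytes([0xD1, camera_id])
            + s24(pan_deg * 32768) + s24(tilt_deg * 32768) + s24(0)  # pan, tilt, roll
            + s24(0) * 3                                            # x, y, z
            + zoom_raw.to_bytes(3, "big") + focus_raw.to_bytes(3, "big")
            + bytes(2))                                             # spare bytes
    return body + bytes([freed_checksum(body)])
```

Round-tripping `encode_freed_d1(1, 45.0, -10.25, 0x080000, 1234)` through `decode_freed_d1` recovers the same pan, tilt, zoom and focus values; a message with a corrupted checksum raises `ValueError` instead of feeding bad angles to a renderer.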

― Yuya Karasawa, 3DCG Director, mikai inc.

Needs

- Provide fans with new experiences by enabling virtual performers to appear in real studios through high-quality, cost-effective video production

Solutions

- Utilize a PTZ camera system with FreeD support to create high-quality AR video content while ensuring cost-effectiveness

Background

Revolutionary concert experiences that combine virtual performers with real spaces

Part of mikai inc., Re:AcT is an agency for virtual talent. On June 20, 2021, two of the agency's performers, Kyo Hanabasami and Leona Shishigami, performed in a live-streamed concert entitled Re:al. The event was filmed and streamed from BOXSTUDIO in BLACKBOX3, a dedicated streaming studio operated by THECOO, Inc., and featured the virtual performers alongside a band of real musicians, with the studio's four permanently installed LED panels in the background. A Panasonic PTZ camera system suited to live AR production filmed the studio, and the virtual performers were composited into that video.

Why choose Panasonic products?

High-quality video content without compromising on cost

mikai's aim in taking its online performances from existing virtual performance spaces into real venues was to create an enhanced user experience. AR was not under active consideration during the initial planning. However, the studio already had Panasonic's 4K Integrated Camera AW-UE100K and 4K Integrated Camera AW-UE150K permanently installed, and their FreeD compatibility makes them well suited to AR video production. This led to the adoption of a method for enhancing the concert experience by compositing the virtual performers into the real studio. To achieve this, a custom system was developed for transmitting FreeD data to Unity*, the 3DCG rendering platform used to create the virtual performers. The result was a system for real-time collaboration between virtual performers and real spaces.
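The details of mikai's FreeD-to-Unity system are not described here. Purely as an illustration, one simple way to structure such a link is a small UDP relay that receives FreeD D1 messages from the cameras, drops anything malformed, and forwards valid messages to the machine running Unity, where a script would decode them. This sketch assumes the cameras send FreeD over UDP; the addresses, ports and function names are hypothetical.

```python
import socket

FREED_D1_LENGTH = 29


def is_valid_d1(packet: bytes) -> bool:
    """Check length, message type (0xD1) and checksum of a FreeD D1 message."""
    return (
        len(packet) == FREED_D1_LENGTH
        and packet[0] == 0xD1
        and (0x40 - sum(packet[:28])) % 256 == packet[28]
    )


def relay_once(rx: socket.socket, tx: socket.socket, unity_addr: tuple) -> bool:
    """Receive one datagram from a camera and forward it to the Unity host if valid."""
    packet, _sender = rx.recvfrom(1024)
    if not is_valid_d1(packet):
        return False  # drop malformed or non-D1 traffic
    tx.sendto(packet, unity_addr)
    return True


def run_relay(listen_port: int, unity_addr: tuple) -> None:
    """Forward FreeD D1 messages from all cameras to the Unity machine indefinitely.

    listen_port and unity_addr are hypothetical values chosen at deployment time.
    """
    rx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    rx.bind(("0.0.0.0", listen_port))
    tx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    while True:
        relay_once(rx, tx, unity_addr)
```

Because each D1 message carries its own camera ID, a single relay can serve several cameras, and the receiving side can keep the latest pose per camera.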

* Unity is a 2D, 3D and VR game development platform developed and sold by Unity Technologies.

Benefits of this system

A compact, cost-effective system for high-quality AR production

Streaming concerts that combine real spaces with virtual performers was a possibility that mikai had previously considered. However, creating high-quality AR content that blends into real environments with conventional cameras requires tracking systems to obtain the camera information, and these are large-scale systems similar to those used by broadcasters, so cost proved to be a major challenge. For this project, FreeD-compatible PTZ cameras transmitted the camera information required for AR through a compact, cost-effective system. This lowered the barriers to AR content production without compromising on quality, and produced an event that offered an enhanced viewer experience.

Kyo Hanabasami and Leona Shishigami performing at their live streaming event entitled Re:al, appearing together with a live band in front of the studio's four LED panels

Video of the performance taken by the AW-UE100K before the addition of the 3DCG virtual performers

Transmission of camera information using FreeD contributes to the creation of realistic video performances

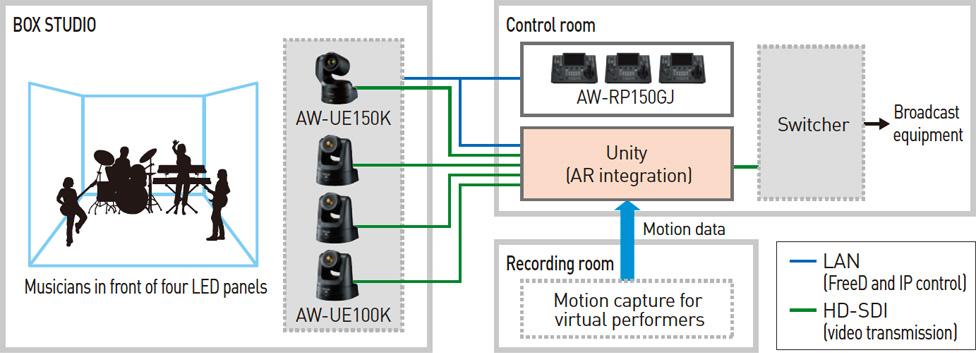

Filming used three 4K Integrated Camera AW-UE100Ks installed as PTZ cameras at the front of the studio, together with a single 4K Integrated Camera AW-UE150K in the ceiling. These cameras are FreeD-compatible, so they transmit pan, tilt, zoom and focus information to Unity over IP. The virtual performers were rendered in 3DCG based on this camera information, and the rendering was composited into the studio video in real time, keeping the positioning of the two precisely synchronized. According to mikai 3DCG director Yuya Karasawa, "When the positioning information for the video from the studio and the virtual performer is not synchronized correctly, the final image feels like a composite. Utilizing the information sent from the AW-UE100K and AW-UE150K via FreeD eliminated this issue almost entirely. As a result, it felt like the two virtual performers were really there alongside the band."
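Raw FreeD zoom values are lens encoder counts rather than angles, so a renderer needs some mapping from zoom to field of view before its virtual camera can match the real lens. A common approach is a per-camera lens calibration table. The sketch below interpolates linearly between calibration points and converts the result to a vertical field of view, which is what Unity's `Camera.fieldOfView` expects. The table values are invented for illustration, and nothing here describes how mikai performed this step.

```python
import bisect
import math

# Hypothetical lens calibration: (raw FreeD zoom value, horizontal FOV in degrees),
# measured per camera at known zoom positions. These numbers are illustrative only.
ZOOM_CALIBRATION = [
    (0x000000, 70.0),
    (0x200000, 40.0),
    (0x400000, 20.0),
    (0x600000, 8.0),
    (0x800000, 3.5),
]


def horizontal_fov(zoom_raw: int, table=ZOOM_CALIBRATION) -> float:
    """Linearly interpolate horizontal FOV (degrees) from a raw zoom value."""
    keys = [z for z, _ in table]
    if zoom_raw <= keys[0]:
        return table[0][1]
    if zoom_raw >= keys[-1]:
        return table[-1][1]
    i = bisect.bisect_right(keys, zoom_raw)  # keys[i-1] <= zoom_raw < keys[i]
    (z0, f0), (z1, f1) = table[i - 1], table[i]
    t = (zoom_raw - z0) / (z1 - z0)
    return f0 + t * (f1 - f0)


def vertical_fov(hfov_deg: float, aspect: float = 16 / 9) -> float:
    """Convert a horizontal FOV to a vertical FOV for a width/height aspect ratio."""
    half = math.radians(hfov_deg) / 2
    return math.degrees(2 * math.atan(math.tan(half) / aspect))
```

Because field of view does not change linearly with zoom position, linear interpolation is only accurate when calibration points are dense enough; the vertical value would then be assigned to the virtual camera each frame alongside the FreeD pan and tilt.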

Three AW-UE100Ks installed at the front of the studio, enabling a range of camera work through pan, tilt and zoom operations

An AW-UE150K installed in the studio ceiling, enabling the creation of dynamic camera angles

Dynamic camera work and high image quality suited to AR video production present new performance possibilities

The PTZ cameras are operated over IP from three Remote Camera Controller AW-RP150GJs in the control room connected to the studio. This lets a small crew produce dynamic camera work with four cameras, even when space in the studio is limited. The studio video must also be high quality so that it can be combined with the 3DCG of the virtual performers. According to Yuya Karasawa, "To create a consistent look, any noise in the video image must also be applied to the 3DCG wherever possible. The low-noise, high-resolution video provided by Panasonic's PTZ cameras meant that this was not necessary. The system expanded the possibilities for performances and provided image quality that was great to work with."

AW-RP150GJ installed in the control room

Three AW-RP150GJs used to operate four studio cameras

System: Shooting system for live AR events

Future prospects

A wealth of new possibilities for virtual performers in real-world spaces

Beyond streaming, music videos and other video content offer future opportunities for performers whose work centers on singing to create content that excites viewers. With Panasonic's PTZ camera systems, any real-world location can serve as video material for AR, expanding the creative possibilities of working with virtual performers in physical spaces.

mikai inc.

Takahiro Uemura (left)

CEO

Kazunori Takaichi (center)

General Director, Management Planning Department, Re:AcT Head Office

Yuya Karasawa (right)

3DCG Director, Research & Development

* Job titles at the time of the interview.

An agency home to unique virtual talent

Re:AcT was launched by mikai inc. as an agency for virtual performers, with the mission of "bringing excitement to the world." The agency is home to a variety of unique talent, including Kyo Hanabasami and Leona Shishigami, each of whom had more than 200,000 YouTube subscribers (as of June 2021).

- Homepage of mikai inc.: https://mikai.co.jp/

- Special website for the live concert: https://v-react.com/live-real/

The logo for Re:al, a concert by Kyo Hanabasami and Leona Shishigami

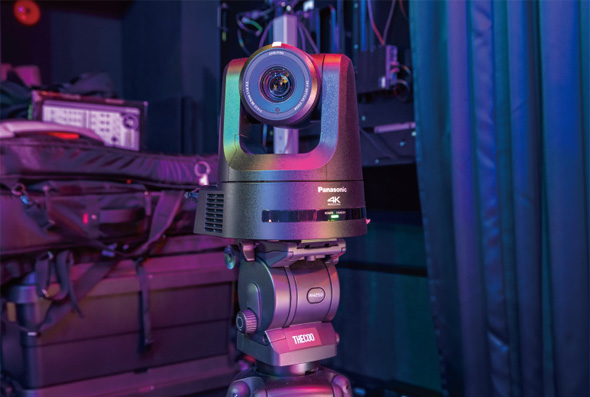

BLACKBOX3, a dedicated streaming studio managed by THECOO, Inc.

- Studio website: https://blackboxxx.jp/

Production using the four LED panels permanently installed in BOXSTUDIO in BLACKBOX3

Equipment Installed

Location